A “COMPAS” That's Pointing in the Wrong Direction – Data Science W231 | Behind the Data: Humans and Values

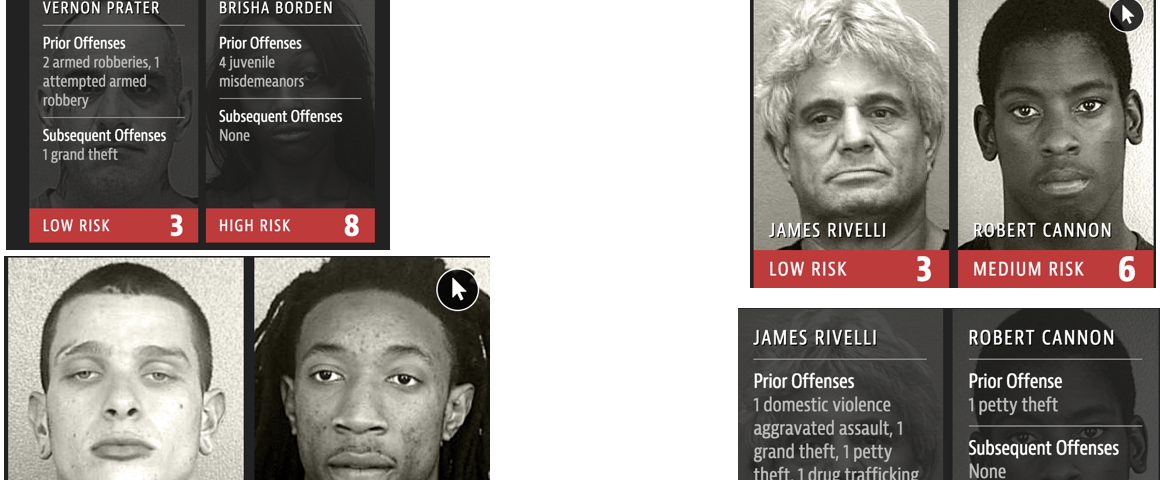

Rachel Thomas on Twitter: "@harini824 The Compas Recidivism Algorithm: - it's no more accurate than random people (Amazon Mechanical Turk) - it's a black box with 137 inputs but no more accurate …

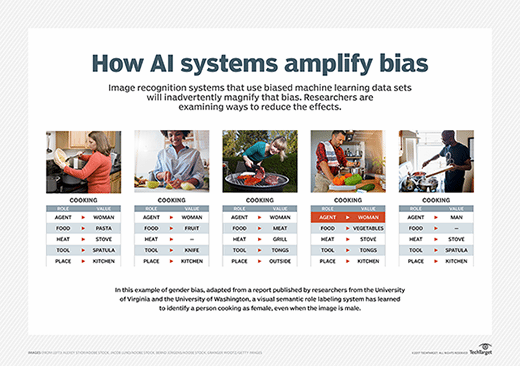

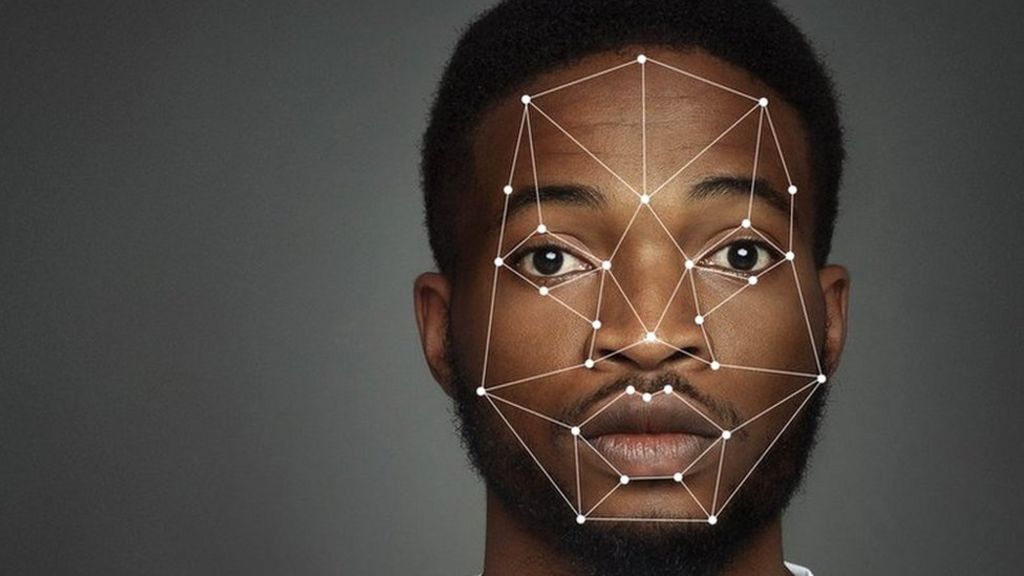

Racial Bias and Gender Bias Examples in AI systems - Adolfo Eliazàt - Artificial Intelligence - AI News

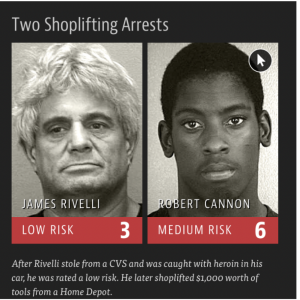

Rachel Thomas on Twitter: "The Compas recidivism algorithm used in US courts has double the false positive rate (people rated high risk who do not reoffend) for Black defendants compared to white …